Design rules on the human side to avoid failing with AI

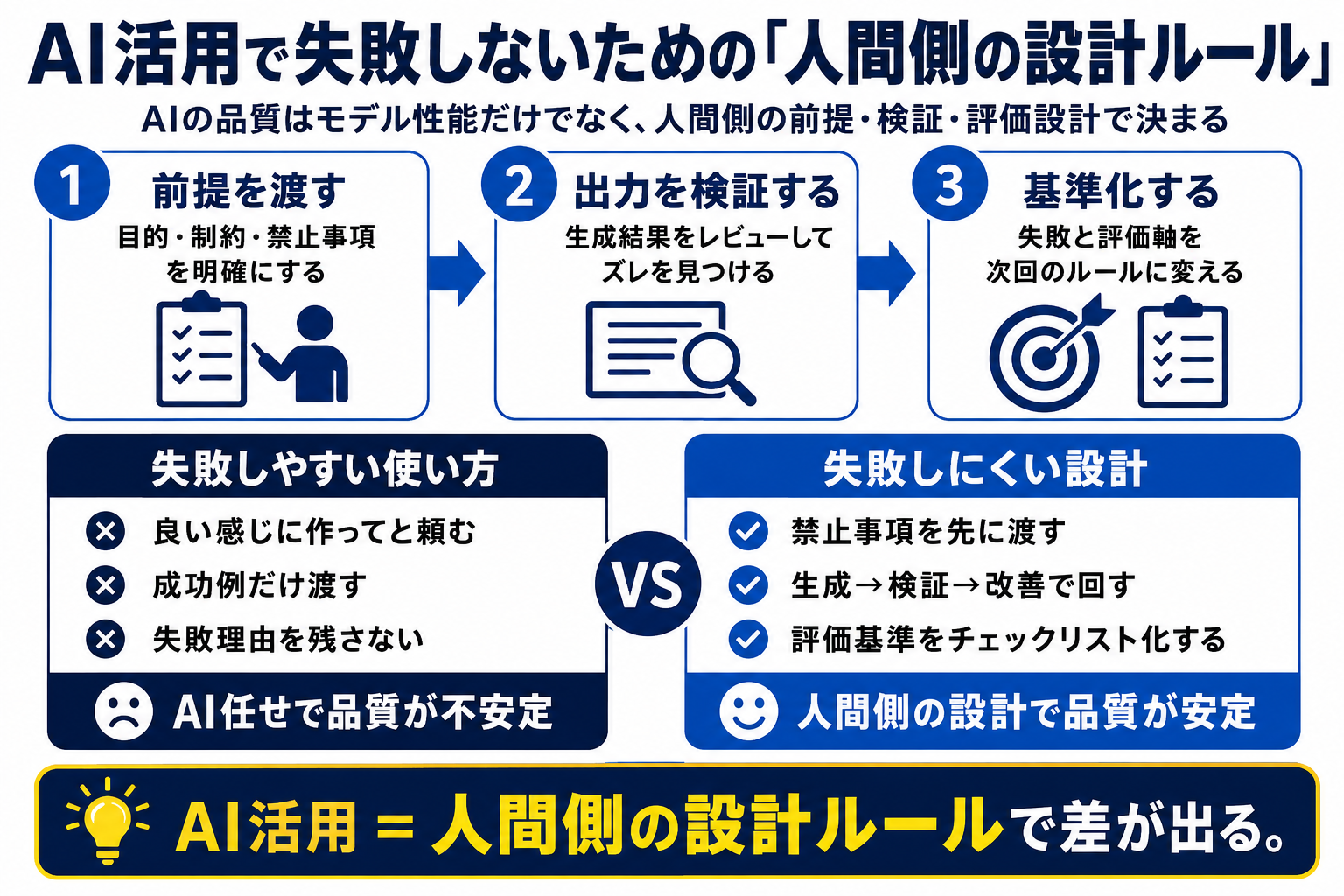

AI is smart, but using a smart AI does not automatically produce good work. Output quality depends on how people provide context, guard against failure, evaluate results, and verify what was produced.

The quality of AI output is decided not only by how smart the model is, but also by how well humans design against failure.

LLM failures are more predictable than they look

AI mistakes are not purely random. The same kinds of failure recur: unrequested features, broken existing behavior, vague naming, unsupported claims, and weak verification. Once those patterns are named, they can be prevented.

Generation speed and quality assurance are different things

AI can speed up work, but speed does not guarantee correctness. Surveys, code, articles, and analysis reports all still need human review of their purpose, assumptions, omissions, and bias.

Each failure should become the next instruction

A useful AI workflow keeps both good and bad outputs. Bad outputs are not just errors; they are material for improving prompts, checklists, review points, and acceptance criteria.
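As a minimal sketch of this loop, assume each bad output is logged by hand with a short label for its failure type and the instruction that would have prevented it. The `FailureRecord` class and `build_checklist` function below are hypothetical, not part of any tool; they only show how a growing failure log can be turned into review items for the next prompt, with the most frequently recurring patterns first.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class FailureRecord:
    """One bad output, kept as material for the next iteration (hypothetical structure)."""
    task: str     # what was asked
    pattern: str  # short label for the failure type, e.g. "unrequested feature"
    lesson: str   # the instruction that would have prevented it


def build_checklist(records: list[FailureRecord]) -> list[str]:
    """Turn logged failures into deduplicated checklist lines, most frequent pattern first."""
    counts = Counter(r.pattern for r in records)
    seen: set[str] = set()
    checklist: list[str] = []
    # sorted() is stable, so records with equally frequent patterns keep their logged order.
    for r in sorted(records, key=lambda r: -counts[r.pattern]):
        if r.lesson not in seen:
            seen.add(r.lesson)
            checklist.append(f"[{r.pattern}] {r.lesson}")
    return checklist


log = [
    FailureRecord("add CSV export", "unrequested feature", "Implement only what the task names."),
    FailureRecord("fix date parsing", "broken existing behavior",
                  "Run the existing tests before and after the change."),
    FailureRecord("add JSON export", "unrequested feature", "Implement only what the task names."),
]

for line in build_checklist(log):
    print(line)
```

The printed lines can be pasted into the next prompt or a review template; the point is that the checklist grows from observed failures rather than from guesses.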

Using AI well means externalizing judgment criteria

Stable AI use requires people to state what they value, what they avoid, which formats are acceptable, and where the quality line sits. The more you delegate to AI, the more clearly human standards must be written down.
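One way to write standards down is to express them as explicit, checkable rules instead of keeping them in a reviewer's head. The sketch below assumes the output is plain text; the `Criteria` fields (required sections, banned phrases, a word limit) and the `check` function are hypothetical stand-ins for whatever a team actually values, and a word limit is only a rough proxy for a quality line.

```python
from dataclasses import dataclass, field


@dataclass
class Criteria:
    """Human standards written down as data (hypothetical fields for illustration)."""
    required_sections: list[str] = field(default_factory=list)  # which format is acceptable
    banned_phrases: list[str] = field(default_factory=list)     # what to avoid
    max_words: int = 500                                        # rough stand-in for a quality line


def check(text: str, c: Criteria) -> list[str]:
    """Return the violations found; an empty list means the text meets the written criteria."""
    problems: list[str] = []
    lowered = text.lower()
    for section in c.required_sections:
        if section.lower() not in lowered:
            problems.append(f"missing section: {section}")
    for phrase in c.banned_phrases:
        if phrase.lower() in lowered:
            problems.append(f"banned phrase: {phrase}")
    words = len(text.split())
    if words > c.max_words:
        problems.append(f"too long: {words} words > {c.max_words}")
    return problems


criteria = Criteria(
    required_sections=["Assumptions", "Sources"],
    banned_phrases=["it is well known"],
    max_words=50,
)
draft = "Summary: it is well known that the change is safe.\nAssumptions: none."
print(check(draft, criteria))
```

Checks like these do not replace human judgment; they make the parts of it that can be stated explicitly repeatable, so reviewers can spend their attention on what cannot be automated.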